In this year’s ICML, some interesting work was presented on Neural Processes. In this blog post, I discuss what Neural Processes are and how they behave as a prior over functions.
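To make "a prior over functions" concrete, here is a minimal sketch of a latent-variable Neural Process decoder with no context set. It draws a latent vector z from a standard normal prior and passes each input x, concatenated with z, through a randomly initialised MLP, so every draw of z yields a different function x → g(x, z). It assumes several things for illustration: the layer sizes, the tanh activations, the Gaussian weight initialisation, and the helper names (`init_decoder`, `decode`, `sample_prior_functions`), which are all hypothetical. It omits the encoder entirely and is not the setup used in the blog post.

```python
import numpy as np

def init_decoder(rng, z_dim=2, hidden=64, n_layers=3):
    """Randomly initialise a hypothetical MLP decoder g(x, z) -> y."""
    sizes = [1 + z_dim] + [hidden] * n_layers + [1]
    params = []
    for d_in, d_out in zip(sizes[:-1], sizes[1:]):
        # Assumed scaling: weights ~ N(0, 1/d_in), biases ~ N(0, 1)
        W = rng.normal(0.0, 1.0 / np.sqrt(d_in), size=(d_in, d_out))
        b = rng.normal(0.0, 1.0, size=d_out)
        params.append((W, b))
    return params

def decode(params, x, z):
    """Evaluate the decoder at inputs x (shape [n]) for one latent z (shape [z_dim])."""
    # Concatenate each scalar input with the same latent vector z
    h = np.concatenate([x[:, None], np.tile(z, (x.shape[0], 1))], axis=1)
    for W, b in params[:-1]:
        h = np.tanh(h @ W + b)
    W, b = params[-1]
    return (h @ W + b)[:, 0]  # linear output layer, shape [n]

def sample_prior_functions(n_samples=5, z_dim=2, seed=0):
    """Draw n_samples functions from the (untrained) NP prior on a fixed grid."""
    rng = np.random.default_rng(seed)
    params = init_decoder(rng, z_dim=z_dim)
    x = np.linspace(-3.0, 3.0, 100)
    # Each z ~ N(0, I) indexes one function x -> g(x, z)
    zs = rng.normal(size=(n_samples, z_dim))
    ys = np.stack([decode(params, x, z) for z in zs])
    return x, ys

x, ys = sample_prior_functions(n_samples=5)
print(ys.shape)  # (5, 100): five function draws evaluated at 100 inputs
```

Because the decoder weights are shared across draws and only z changes, the spread of the sampled curves shows how much functional variability the latent variable alone induces under this particular architecture and initialisation.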

I am a Machine Learning researcher with 10+ years of experience developing AI/ML methods with demonstrated impact in both foundational research and real-world applications. With a background in probabilistic deep learning, I work across generative models, Large Language Models (LLMs), and multimodal learning. I am currently a Research Scientist at Novo Nordisk, where I focus on using LLMs and LLM agents for scientific discovery in biology. My recent work explores how LLMs can advance cellular perturbation modeling and contribute to the broader goal of building virtual cells.

I did my PhD in Statistical Machine Learning at the University of Oxford, as part of the OxCSML group in the Department of Statistics, supervised by Christopher Yau and Chris Holmes. After graduating, I continued working with the Apple Health AI team and also spent time in academia, at the Alan Turing Institute (as a recipient of the Turing-Crick Biomedical Data Science Award) and at the Big Data Institute in Oxford.

News: Our work “LangPert: LLM-Driven Contextual Synthesis for Unseen Perturbation Prediction” was selected for an oral presentation at the MLGenX workshop at ICLR 2025.

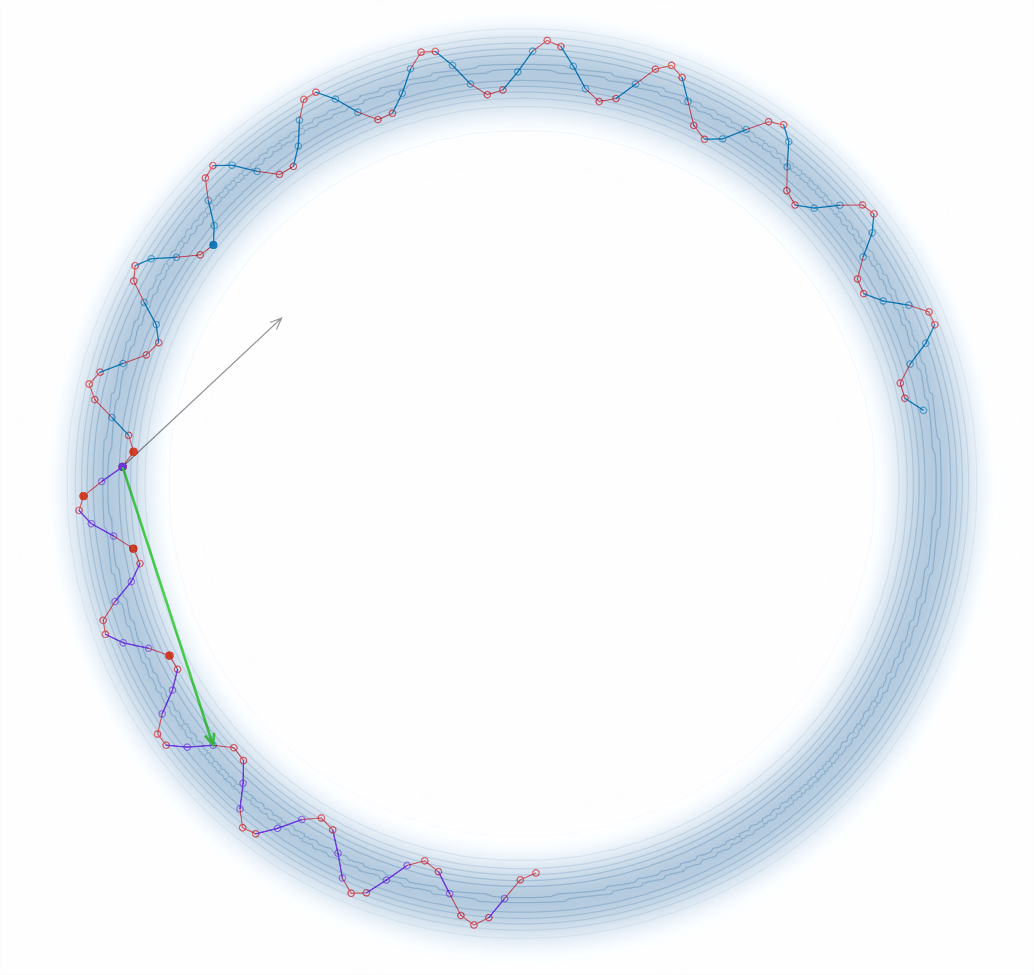

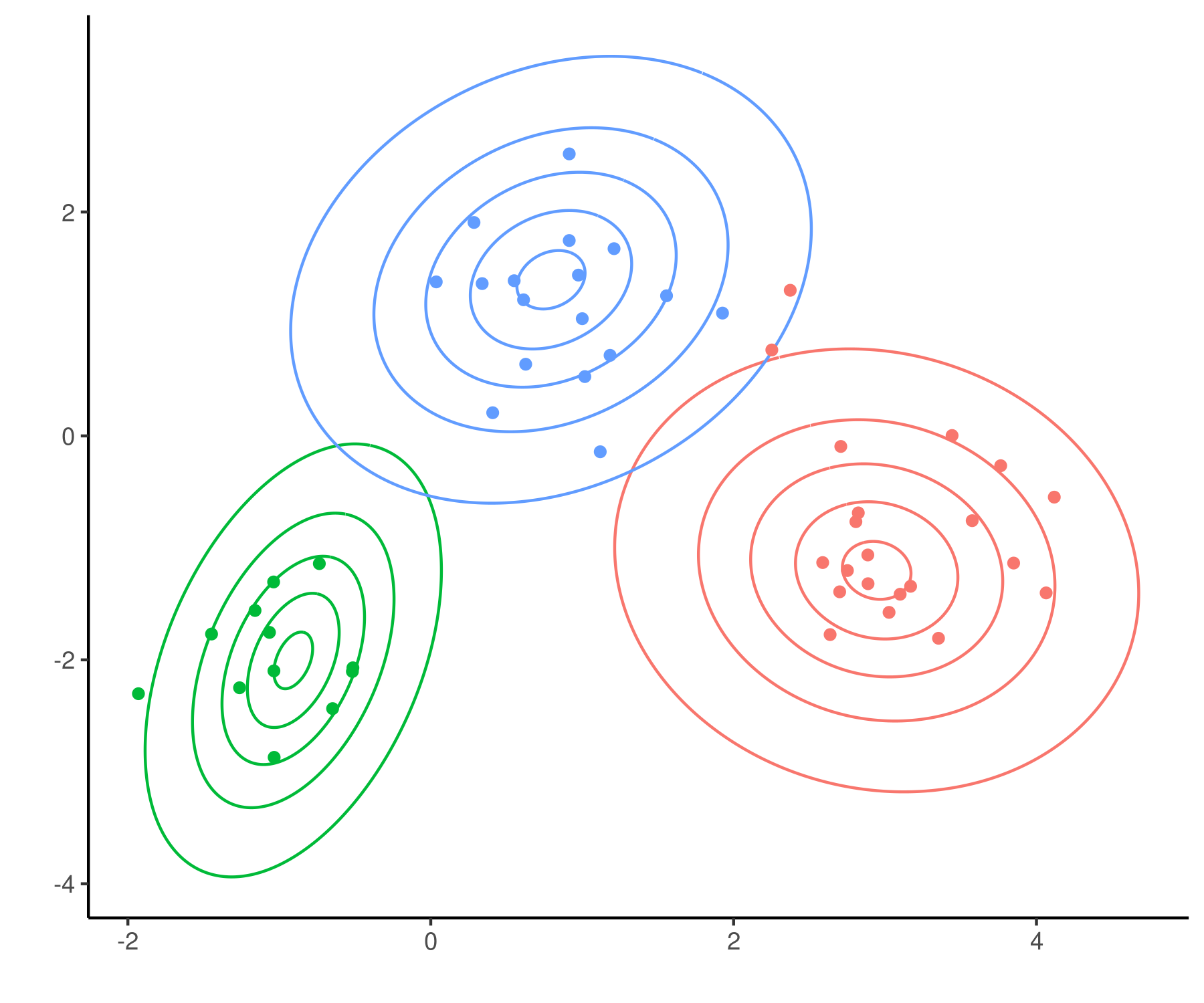


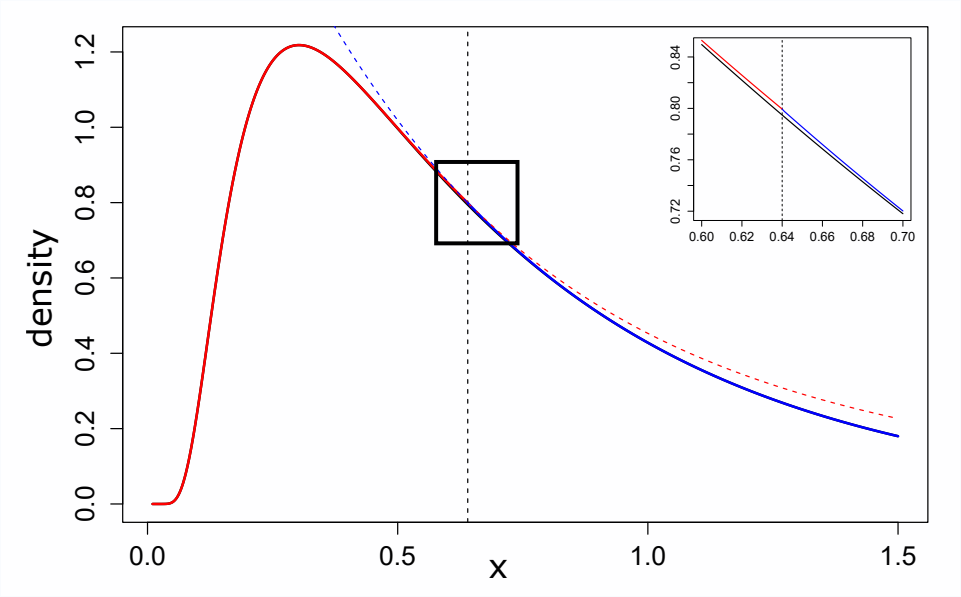

- NUTS (No-U-Turn Sampler) implementation in R
- Rcpp implementation of Dirichlet process (DP) mixtures and mixtures of finite mixtures (MFM)
- R package implementing the Polya-Gamma augmentation scheme

Here you can find course material (in Estonian!) on Data Science and Visualisation, which we created together with Tanel Pärnamaa. The course, “Statistiline andmeteadus ja visualiseerimine” (Statistical Data Science and Visualisation), is built around a series of case studies and teaches good data science practice in R by applying statistical methods to these real-life problems.